Now copy the data into the table you created above. Url='s3://gluebmcwalkerrowe/paphosWeather.csv'Ĭredentials=(aws_key_id='xxxxxxx' aws_secret_key='xxxxxxx') In fact, there is no place to look up your Snowflake credentials since they are the same as your Amazon IAM credentials-even if you are running it on a Snowflake instance, i.e., you did not install Snowflake locally on your EC2 servers.

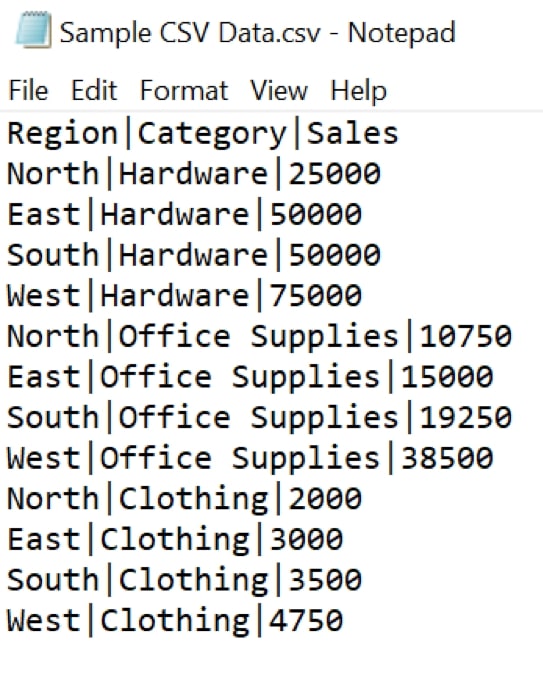

It does not matter what your snowflake credentials are. Then create a Snowflake stage area like this. (Presumably that is because they would prefer that you define the column data types, number precision, and size.) create table weather(ĭescription varchar(50)) Copy data file to Snowflake stage area Unfortunately, Snowflake does not read the header record and create the table for you. Upload this data to Amazon S3 like this: aws s3 cp paphosWeather.csv s3://gluebmcwalkerrowe/paphosWeather.csv Create table in Snowflake This is just a small subset of 1,000 records converted to CSV format.) (We purchased 20 years of weather data for Paphos, Cyprus, from OpenWeather. Download 1,000 weather records from here. You need some data to work through this example.

Use the right-hand menu to navigate.) Sample data (This article is part of our Snowflake Guide. We’ve also covered how to load JSON files to Snowflake. In this tutorials, we show how to load a CSV file from Amazon S3 to a Snowflake table.
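A minimal sketch of the load step follows. It assumes that the columns of the `weather` table match the columns of the CSV file in order and that the file starts with exactly one header row. It reads the file directly from its S3 URL with the same placeholder AWS keys, and `skip_header = 1` tells Snowflake to skip that header row rather than load it as data:

```sql
-- Sketch: assumes weather's columns match the CSV's column order
-- and that paphosWeather.csv has a single header row.
copy into weather
  from 's3://gluebmcwalkerrowe/paphosWeather.csv'
  credentials = (aws_key_id = 'xxxxxxx' aws_secret_key = 'xxxxxxx')
  file_format = (type = csv field_delimiter = ',' skip_header = 1);

-- Spot-check a few of the loaded rows.
select * from weather limit 10;
```

To load through a named stage instead, replace the `from` clause with `@` followed by the stage name. You can then drop the `credentials` clause, because the stage already carries the AWS keys.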
